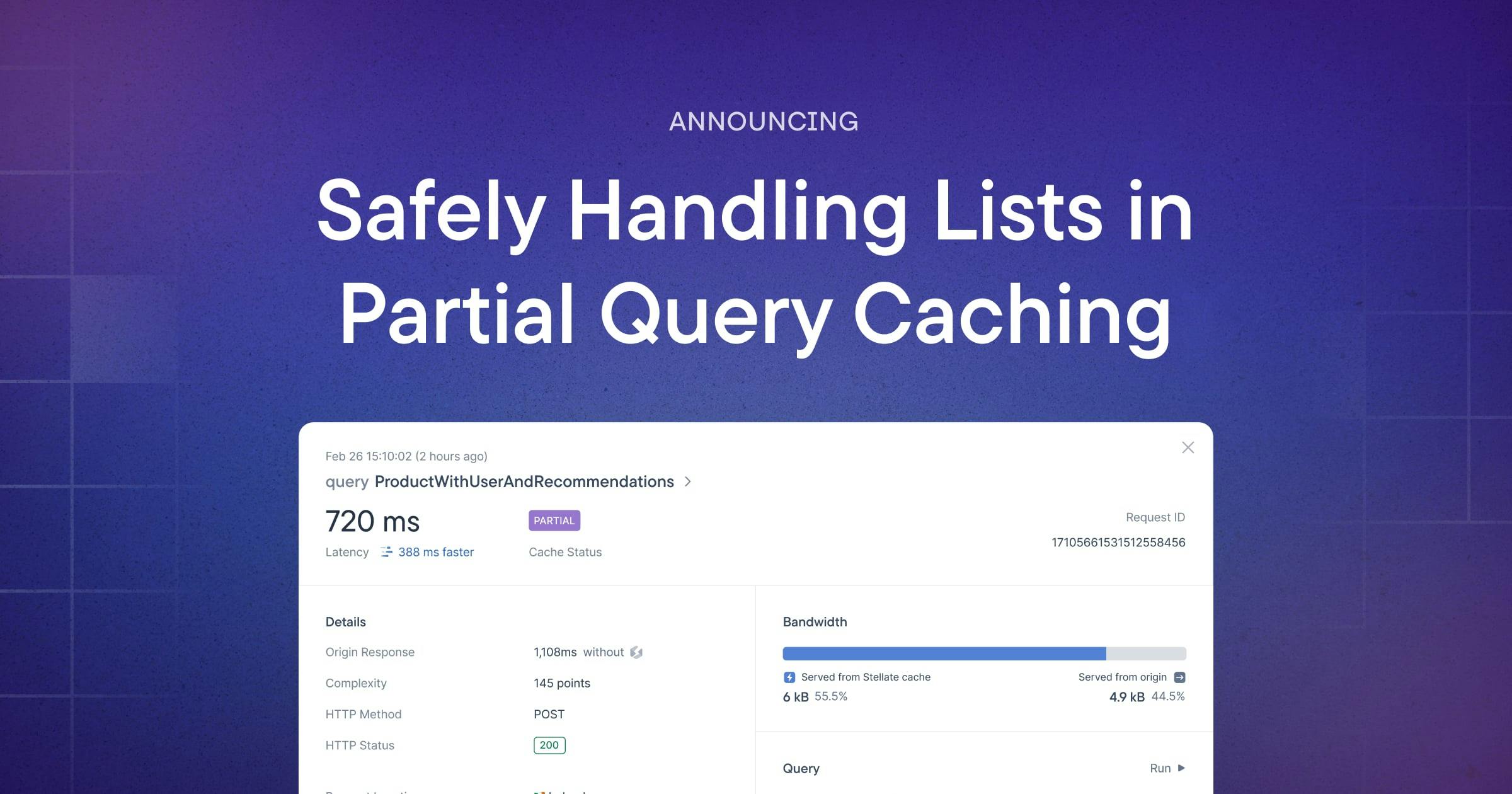

The primary goal of partial query caching (PQC) is to maximize how much of a response can be served from the cache. We accomplish this by splitting a request into cacheable and non-cacheable parts, a delineation we make using the service’s cache config in combination with GraphQL’s type system. The cacheable parts are read from the cache and the non-cacheable parts are read from the origin. The two responses are then combined and returned to the client.

As an example, consider a product type:

    type Product {
      id: ID!
      name: String!
      hasStock: Boolean!
    }

In the cache config, we’ve opted to cache the product’s name but not its in-stock status.

    const config: Config = {
      config: {
        nonCacheable: ['Product.hasStock'],
        partialQueryCaching: { enabled: true },
        rules: [
          {
            types: ['Product'],
            maxAge: 604800, // 7 days, in seconds
            description: 'Cache product names for a week',
          },
        ],
      },
    }

Based on the config, determining what to read from the cache and what to read from the origin is simple.

    # Cache read: name can be read from the cache
    query {
      product {
        id
        name
      }
    }

    # Returns
    { "id": "shoe-1", "name": "Blue Sneaker" }

    # Origin: hasStock is never cached and is always fetched from the origin
    query {
      product {
        id
        hasStock
      }
    }

    # Returns
    { "id": "shoe-1", "hasStock": true }
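The split shown above can be sketched in a few lines of TypeScript. This is only an illustration under stated assumptions, not the actual PQC implementation: `splitFields` and `FieldSplit` are hypothetical names, the query is simplified to a flat list of field names on one type, and the key field is assumed to be `id`.

```typescript
// Hypothetical sketch: names and shapes here are assumptions, not a real PQC API.
type FieldSplit = { cacheable: string[]; nonCacheable: string[] };

function splitFields(
  typeName: string,
  requestedFields: string[],
  nonCacheable: string[],
  keyFields: string[] = ["id"],
): FieldSplit {
  const blocked = new Set(nonCacheable);
  const split: FieldSplit = { cacheable: [], nonCacheable: [] };

  for (const field of requestedFields) {
    // Key fields are added to both parts after the loop.
    if (keyFields.includes(field)) continue;
    const target = blocked.has(`${typeName}.${field}`)
      ? split.nonCacheable
      : split.cacheable;
    target.push(field);
  }

  // Both parts carry the key fields so the two responses can be matched up later.
  split.cacheable.unshift(...keyFields);
  split.nonCacheable.unshift(...keyFields);
  return split;
}

const productParts = splitFields(
  "Product",
  ["id", "name", "hasStock"],
  ["Product.hasStock"],
);
console.log(productParts.cacheable);    // fields for the cache read
console.log(productParts.nonCacheable); // fields for the origin request
```

Repeating the key field in both parts mirrors the two queries above, where `id` appears in the cache read and in the origin request.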

Because the type system lets us reason confidently about the type’s shape, the two responses can be combined once they return, and a product similar to the pseudo-response below is returned to the client:

    { "id": "shoe-1", "name": "Blue Sneaker", "hasStock": true }
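As a rough sketch of this combining step for a single object, assuming both partial responses include the key field `id`, the merge could look like the following. `mergeParts` is a hypothetical helper written for illustration, not a function exposed by the service.

```typescript
type Json = Record<string, unknown>;

// Hypothetical helper: merges the cached part and the origin part of one object.
// Returns null when the two parts do not describe the same object.
function mergeParts(cached: Json, origin: Json, keyField: string = "id"): Json | null {
  if (cached[keyField] === undefined || cached[keyField] !== origin[keyField]) {
    return null; // keys are missing or differ, so merging would be a guess
  }
  // Fields from the origin are layered on top of the cached fields.
  return { ...cached, ...origin };
}

const mergedProduct = mergeParts(
  { id: "shoe-1", name: "Blue Sneaker" },
  { id: "shoe-1", hasStock: true },
);
console.log(mergedProduct);
```

Returning `null` instead of a best-effort merge reflects the priority on data correctness: when the keys do not match, the safe choice is to go back to the origin.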

This is simple enough when fetching a single product. It gets trickier when fetching many products in the form of a list.

Lists in PQC

Lists are collections of objects; in our example, products.

    type Query {
      products: [Product]
    }

Let’s revisit our previous query for a single product, which was then split into cacheable and non-cacheable parts by PQC. This time, however, let’s attempt to fetch and return multiple products.

    query {
      products {
        id
        name
      }
    }

    # Returns
    [
      { "id": "shoe-1", "name": "Blue Sneaker" },
      { "id": "hoodie-2", "name": "Purple Hoodie" },
      { "id": "socks-3", "name": "Stellate Socks" }
    ]

    query {
      products {
        id
        hasStock
      }
    }

    # Returns
    [
      { "id": "shoe-1", "hasStock": true },
      { "id": "hoodie-2", "hasStock": false },
      { "id": "socks-3", "hasStock": true }
    ]

So far, so good. But what if, the next time we fetch the availability of the products, a new item has been inserted at index 1?

    query {
      products {
        id
        hasStock
      }
    }

    # Returns
    [
      { "id": "shoe-1", "hasStock": true },
      { "id": "undies-1", "hasStock": true },
      { "id": "hoodie-2", "hasStock": false },
      { "id": "socks-3", "hasStock": true }
    ]

The shape of the list fetched from the origin has evolved; the response read from the cache and response read from the origin are no longer consistent. The two responses cannot be merged without making assumptions, which has the potential to impact data correctness. Because data correctness is always prioritized, we will not attempt to merge inconsistent response bodies and instead will re-fetch the entire payload from the origin.

List Requirements

In order to take advantage of PQC with lists, two conditions must be met:

1. The type in question has its keyFields defined. By default we assume this is the id field, as in our example above, but you can override it as well.
2. The key-field values for each item are consistent across cache entries, in both their presence and their order.

If either requirement is not met, we simply re-request the list from your origin.
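The two requirements can be expressed as a check that runs before any merge. The sketch below is an assumption about how such a check might look, not the actual implementation: `keyOf` and `mergeLists` are hypothetical names, and a `null` result stands in for "re-request the list from the origin".

```typescript
type Item = Record<string, unknown>;

// Hypothetical helper: builds a comparable key from an item's key fields.
function keyOf(item: Item, keyFields: string[]): string {
  return JSON.stringify(keyFields.map((field) => item[field]));
}

// Returns the merged list, or null when the caller should re-fetch from the origin.
function mergeLists(
  cached: Item[],
  origin: Item[],
  keyFields: string[] = ["id"],
): Item[] | null {
  // An item was added or removed: presence is inconsistent.
  if (cached.length !== origin.length) return null;

  const merged: Item[] = [];
  for (let i = 0; i < cached.length; i++) {
    const a = cached[i];
    const b = origin[i];
    if (a === undefined || b === undefined) return null;
    // Requirement 1: every key field must be present on both items.
    const hasKeys = keyFields.every(
      (field) => a[field] !== undefined && b[field] !== undefined,
    );
    // Requirement 2: the same key must sit at the same index in both lists.
    if (!hasKeys || keyOf(a, keyFields) !== keyOf(b, keyFields)) return null;
    merged.push({ ...a, ...b });
  }
  return merged;
}

const cachedNames: Item[] = [
  { id: "shoe-1", name: "Blue Sneaker" },
  { id: "hoodie-2", name: "Purple Hoodie" },
  { id: "socks-3", name: "Stellate Socks" },
];
const originStock: Item[] = [
  { id: "shoe-1", hasStock: true },
  { id: "hoodie-2", hasStock: false },
  { id: "socks-3", hasStock: true },
];
const originAfterInsert: Item[] = [
  { id: "shoe-1", hasStock: true },
  { id: "undies-1", hasStock: true },
  { id: "hoodie-2", hasStock: false },
  { id: "socks-3", hasStock: true },
];

console.log(mergeLists(cachedNames, originStock));       // merged list of three products
console.log(mergeLists(cachedNames, originAfterInsert)); // null: fall back to the origin
```

Comparing keys position by position, rather than matching items by key regardless of order, is a deliberate choice in this sketch: it treats a reordered list as inconsistent, in line with the requirement that both presence and order match.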

Conclusion

Partial query caching is a powerful way to optimize cache effectiveness. You can continue to model your data in the way that makes sense for your use case without being inhibited by caching considerations, while also maintaining data correctness and security.