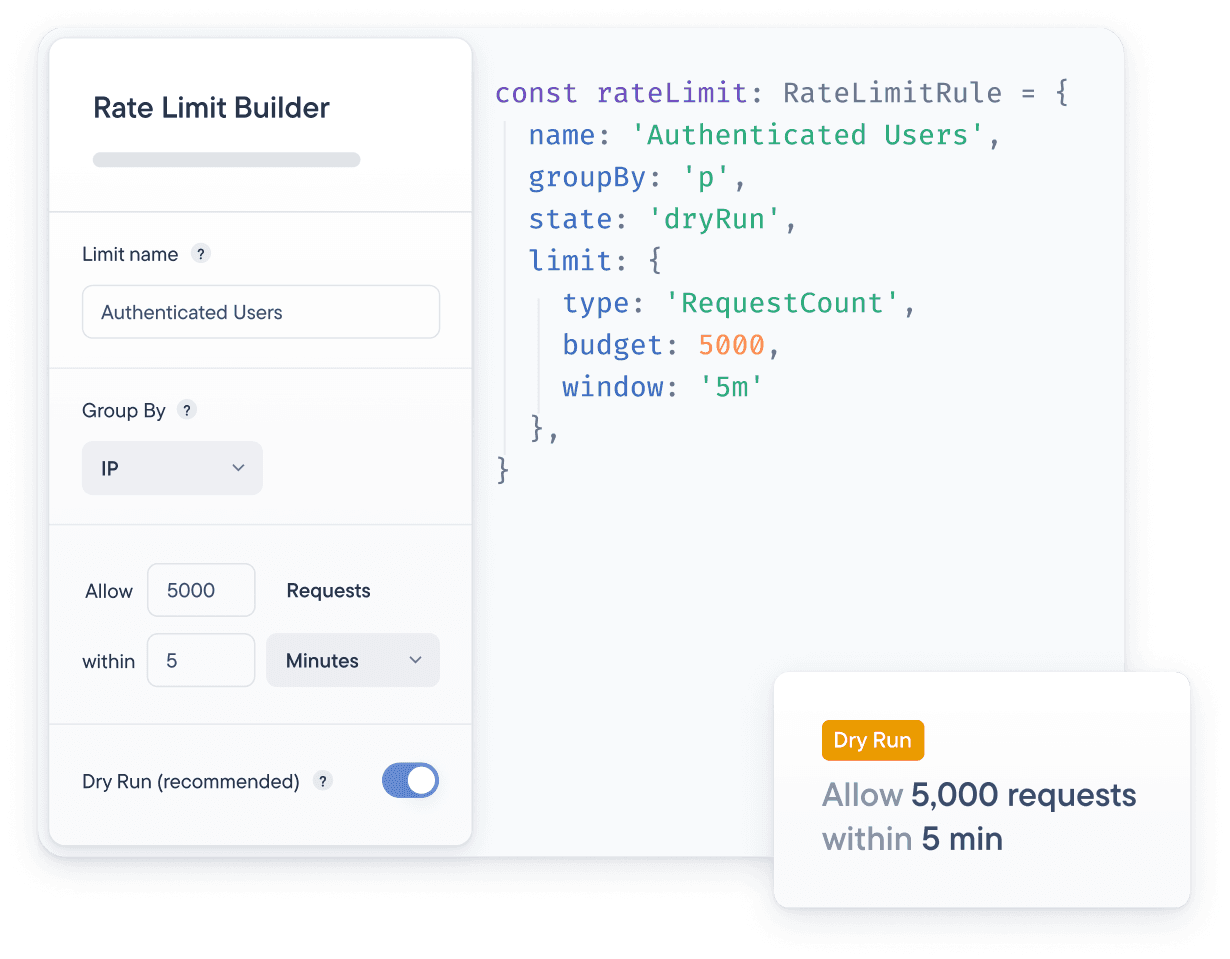

In dry run mode, rate limiting tracks who would have been blocked without actually blocking anyone. That way, you can assess whether the rules you configured work the way you want them to!

Control your GraphQL API's load

One GraphQL query can be the equivalent of hundreds of REST calls, so existing rate limiting solutions based on request counts aren’t good enough.

Prevent service degradation

Malicious actors, misconfigured clients, and traffic spikes can cause slowdowns or downtime for your GraphQL API.

Stop scrapers from getting your data

If you have valuable data, you can bet that somebody will attempt to scrape it for their own use.

Contain your infrastructure costs

While serverless infrastructure allows you to scale quickly, it can also get very costly if you don't have control over your traffic.

Enforce your SLA terms

Ensure that customers who have signed Service Level Agreements (SLAs) for your API stay within their traffic allowance.

Rate limit specific GraphQL operations

Limiting requests per second isn’t good enough to control the server load of a GraphQL API. Our GraphQL Rate Limiting allows you to rate limit specific operations.

Limit by HTTP requests count

Allow 3 requests / second

Limit by operation

Allow 1 addToCart mutation / second
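To make the difference between the two limits concrete, here is a minimal sketch that applies both at once: 3 requests per second per consumer, plus 1 `addToCart` mutation per second. The `FixedWindowCounter` class, the `IncomingOperation` shape, and the `isAllowed` function are hypothetical names for illustration only. This is not Stellate's implementation, and a fixed window is just one of several possible counting strategies.

```typescript
// Hypothetical fixed-window counter, for illustration only.
class FixedWindowCounter {
  private readonly budget: number;
  private readonly windowMs: number;
  private readonly counts = new Map<string, { windowStart: number; used: number }>();

  constructor(budget: number, windowMs: number) {
    this.budget = budget;
    this.windowMs = windowMs;
  }

  // Returns true if one more event for `key` fits in the current window.
  allow(key: string, nowMs: number): boolean {
    const windowStart = Math.floor(nowMs / this.windowMs) * this.windowMs;
    let entry = this.counts.get(key);
    if (!entry || entry.windowStart !== windowStart) {
      entry = { windowStart, used: 0 };
      this.counts.set(key, entry);
    }
    if (entry.used >= this.budget) return false;
    entry.used += 1;
    return true;
  }
}

// Assumed shape of an already-parsed GraphQL operation.
interface IncomingOperation {
  ip: string;
  operationType: "query" | "mutation" | "subscription";
  rootField: string;
}

const requestLimit = new FixedWindowCounter(3, 1000);   // 3 requests / second
const addToCartLimit = new FixedWindowCounter(1, 1000); // 1 addToCart mutation / second

function isAllowed(op: IncomingOperation, nowMs: number): boolean {
  // Every operation counts against the HTTP-level request limit.
  if (!requestLimit.allow(op.ip, nowMs)) return false;
  // Only addToCart mutations count against the operation-level limit.
  if (op.operationType === "mutation" && op.rootField === "addToCart") {
    return addToCartLimit.allow(op.ip, nowMs);
  }
  return true;
}
```

With these settings, a second `addToCart` mutation inside the same second is rejected even though the consumer is still under the request limit, while a cheap query in that same second still goes through.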

Everything you need to protect your GraphQL API at the edge

By blocking unwanted traffic at the edge, none of it even touches your infrastructure. On top of that, our GraphQL Rate Limiting is integrated with our GraphQL Edge Caching to ensure scrapers cannot scrape your cache either.

    {
      rateLimits: (req) => [
        {
          name: 'Request Limit',
          groupBy: 'ip',
          limit: {
            type: 'RequestCount',
            budget: 60,
            window: '1m',
          },
        },
      ],
    }

Add request-based rate limiting for a baseline of protection on the HTTP layer. We support identifying consumers by any part of the request.
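As a rough illustration of what a `budget` of 60 per `'1m'` window grouped by consumer means, the sketch below counts requests in a sliding window. Everything in it is an assumption made for the example: the `EdgeRequest` shape, the `x-api-key` header, the `s`/`m`/`h` window units, and the sliding-log strategy itself. It does not describe how Stellate evaluates the configuration.

```typescript
// Assumed minimal request shape; not a Stellate type.
interface EdgeRequest {
  ip: string;
  headers: Record<string, string | undefined>;
}

// Converts strings such as '1m' into milliseconds.
// The supported units here (s, m, h) are an assumption for this sketch.
function parseWindow(window: string): number {
  const match = /^(\d+)(s|m|h)$/.exec(window);
  if (!match) throw new Error(`Unsupported window: ${window}`);
  const unitMs = { s: 1000, m: 60000, h: 3600000 };
  return Number(match[1]) * unitMs[match[2] as "s" | "m" | "h"];
}

// Identify the consumer by a part of the request:
// a hypothetical API key header if present, otherwise the IP address.
function consumerKey(req: EdgeRequest): string {
  const apiKey = req.headers["x-api-key"];
  return apiKey ? `key:${apiKey}` : `ip:${req.ip}`;
}

// Sliding-log limiter: keeps the timestamps of recent allowed requests per consumer.
function createLimiter(budget: number, window: string) {
  const windowMs = parseWindow(window);
  const hits = new Map<string, number[]>();
  return (req: EdgeRequest, nowMs: number): boolean => {
    const key = consumerKey(req);
    // Drop timestamps that have fallen out of the window.
    const recent = (hits.get(key) ?? []).filter((t) => nowMs - t < windowMs);
    const allowed = recent.length < budget;
    if (allowed) recent.push(nowMs);
    hits.set(key, recent);
    return allowed;
  };
}
```

Calling `createLimiter(60, '1m')` returns a function that accepts the first 60 requests from a consumer within any one-minute span and rejects the rest, while other consumers keep their own separate budget.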


Find the right rate limits for your GraphQL API

How can you find the right request and complexity limits for your GraphQL API? We provide you with tools that help you do it simply and quickly.

Start in dry run mode

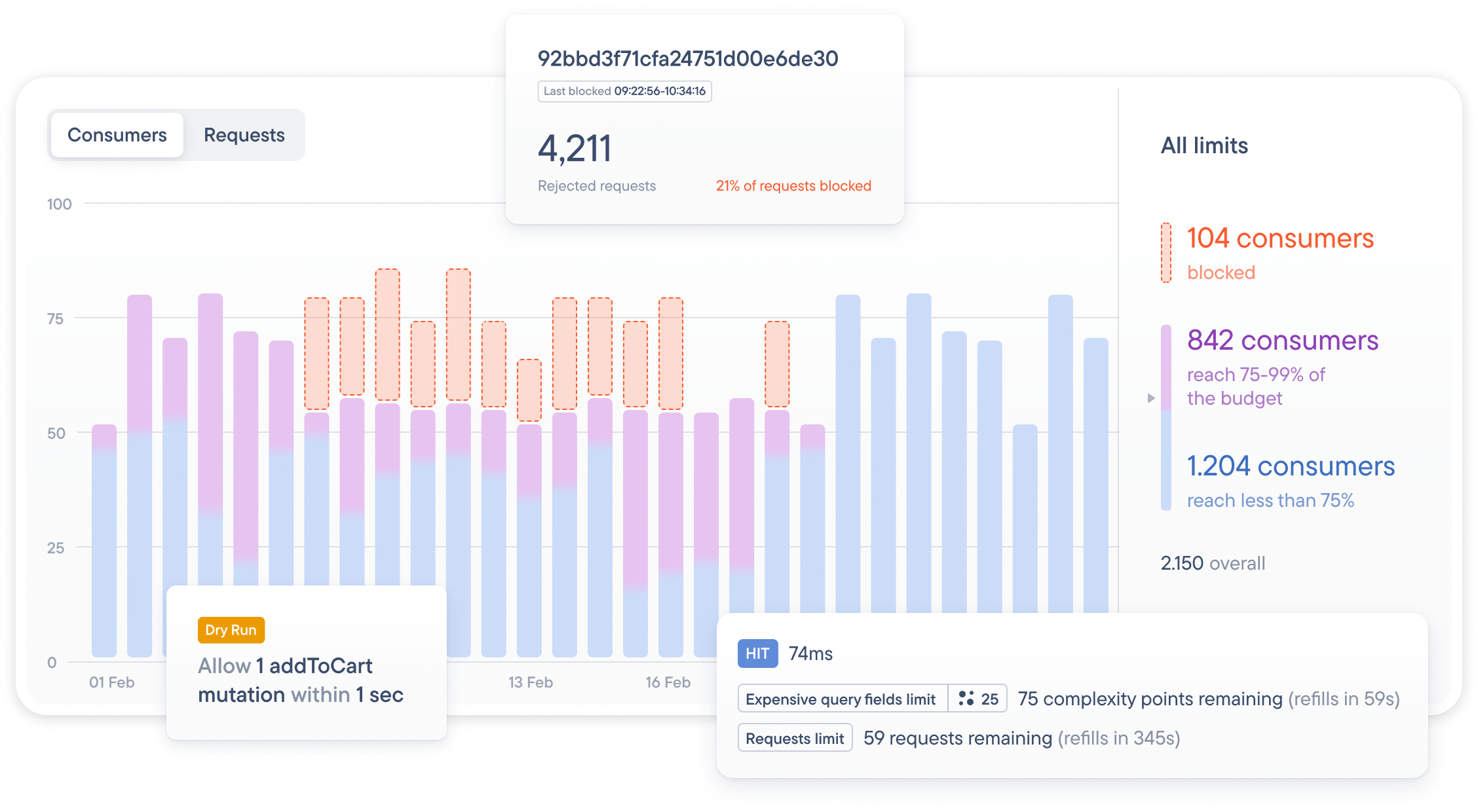
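Dry run mode, as this page describes it, records who would have been blocked without blocking anyone. A minimal sketch of that idea follows. The `DryRunLimiter` class and the `Mode` and `Decision` types are hypothetical and not part of Stellate's API, and the sketch counts over a single window to stay short.

```typescript
type Mode = "dryRun" | "enforce";

interface Decision {
  allowed: boolean;    // what the caller should actually do
  wouldBlock: boolean; // what the rule says, regardless of mode
}

// Hypothetical limiter that evaluates a budget but only enforces it in "enforce" mode.
class DryRunLimiter {
  private readonly budget: number;
  private readonly mode: Mode;
  private readonly used = new Map<string, number>();
  // Consumers that exceeded the budget, kept for later review.
  readonly wouldHaveBlocked: string[] = [];

  constructor(budget: number, mode: Mode) {
    this.budget = budget;
    this.mode = mode;
  }

  check(consumer: string): Decision {
    const used = (this.used.get(consumer) ?? 0) + 1;
    this.used.set(consumer, used);
    const overBudget = used > this.budget;
    if (overBudget) this.wouldHaveBlocked.push(consumer);
    // In dry run mode the request always passes; the block is only recorded.
    return { allowed: this.mode === "dryRun" || !overBudget, wouldBlock: overBudget };
  }
}
```

Reviewing `wouldHaveBlocked` after a period of real traffic shows whether a rule would have hit legitimate consumers before it is switched to enforcing.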

Visualize your traffic distribution

Stellate’s GraphQL Metrics tracks the distribution of requests and query complexity per timeframe per consumer, so you can see where to set your limits.
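One way to read such a distribution is to pick a limit at a high percentile of observed per-consumer traffic, so that typical consumers stay well below it and only outliers are affected. The sketch below assumes you have exported per-consumer request counts for a timeframe. The `percentile` helper and the sample numbers are made up for illustration and are not produced by Stellate.

```typescript
// Nearest-rank percentile over a list of samples.
function percentile(values: number[], p: number): number {
  if (values.length === 0) throw new Error("no samples");
  const sorted = [...values].sort((a, b) => a - b);
  // Rank is 1-based: the smallest value whose position covers p percent of samples.
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100));
  return sorted[rank - 1] as number;
}

// Made-up requests per minute for ten consumers; one of them is an outlier.
const requestsPerMinute = [5, 12, 8, 300, 10, 9, 11, 7, 6, 14];

// A candidate budget that nine of the ten consumers would never reach.
const candidateBudget = percentile(requestsPerMinute, 90);
```

Whether the 90th percentile is the right cut-off depends on your traffic; the point is only that the limit is derived from observed data rather than guessed.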

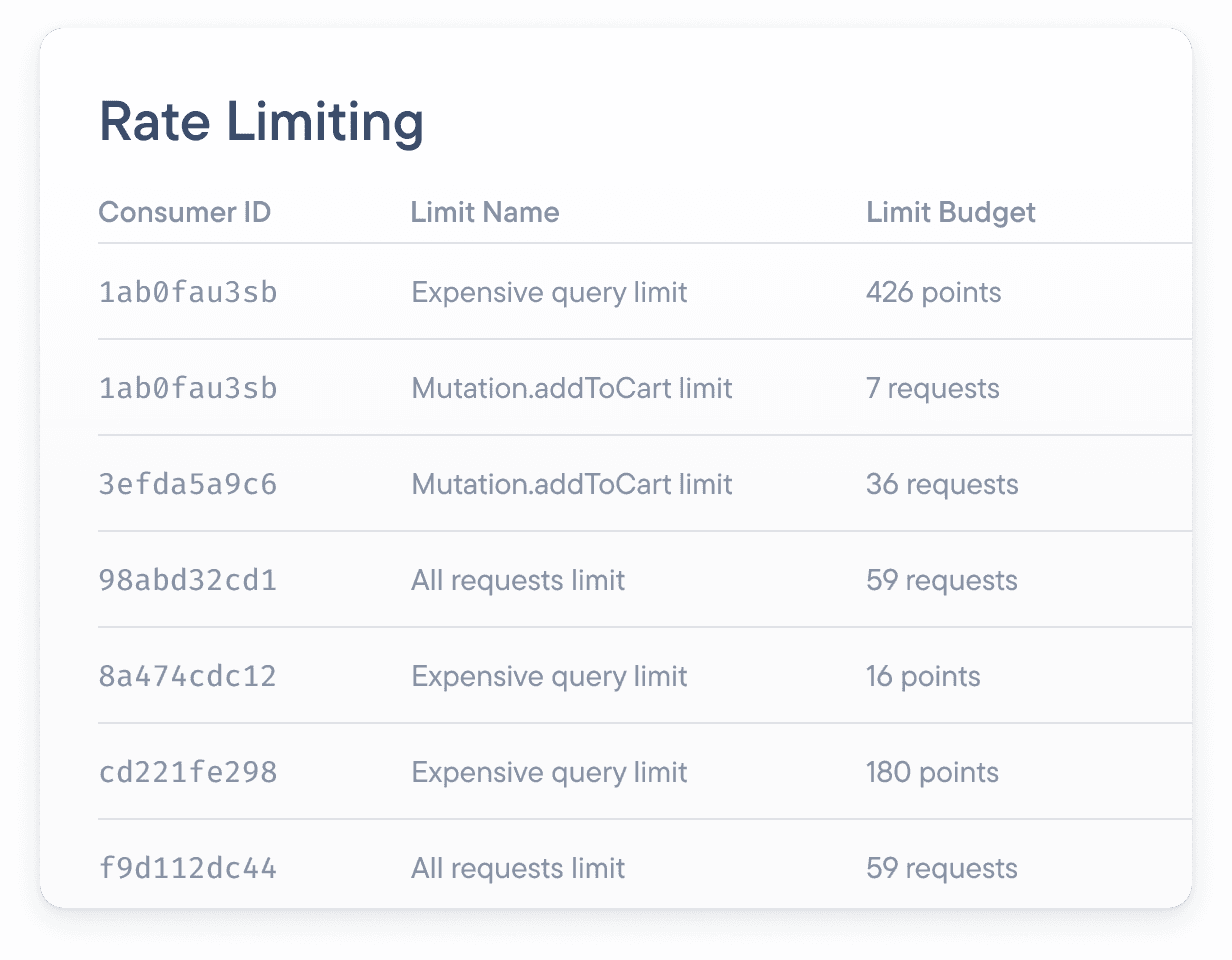

Real-time visibility into your rate limits

To make sure you’re not blocking the wrong people, Stellate’s GraphQL Metrics gives you an overview of all API consumers and their traffic, including when they were blocked.

Learn how our GraphQL complexity analysis works

The complexity score is a measure of how costly it will likely be to execute a GraphQL operation. Test it out in the GraphiQL on the Stellate dashboard!
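To give a feel for how a complexity score can grow with nesting, here is a toy scoring function. The weights are assumptions chosen for the example: every field costs 1, and a list field multiplies the cost of its children by the requested page size. The `FieldNode` shape is hypothetical, and Stellate's actual scoring rules may differ.

```typescript
// Hypothetical, already-parsed selection tree.
interface FieldNode {
  name: string;
  listSize?: number;      // e.g. the value of a `first` argument, if any
  children?: FieldNode[];
}

// Toy cost model: 1 for the field itself, plus children multiplied by the list size.
function complexity(field: FieldNode): number {
  const childCost = (field.children ?? []).reduce((sum, child) => sum + complexity(child), 0);
  return 1 + (field.listSize ?? 1) * childCost;
}

// Roughly: products(first: 10) { name reviews(first: 5) { body } }
const productsQuery: FieldNode = {
  name: "products",
  listSize: 10,
  children: [
    { name: "name" },
    { name: "reviews", listSize: 5, children: [{ name: "body" }] },
  ],
};
```

Under this toy model `complexity(productsQuery)` is 71, compared with 2 for a single product's name, which is why counting requests alone says little about server load.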

More than just rate limiting

Make sure your metrics always look good with Stellate’s GraphQL edge caching, included by default.

Want to see how it works?

Stellate helps companies reduce their infrastructure costs by up to 40%, eliminate downtime, and improve performance.